Robots fail in the real world not because the planner is wrong, but because testing is too slow, too expensive, and too “non-immersive.”

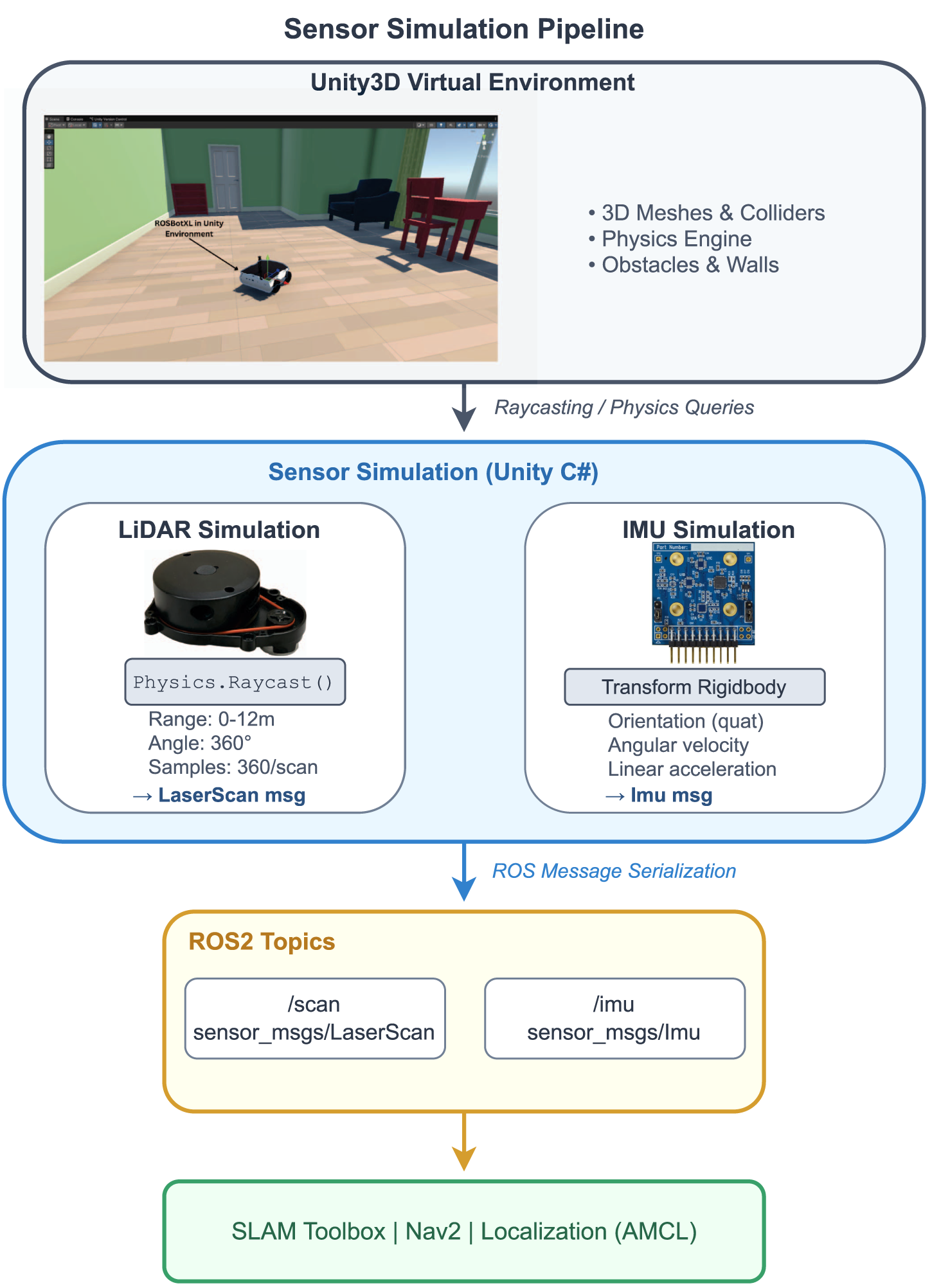

This open-access paper introduces SimNav‑XR, an extended-reality (XR) simulation workflow that bridges ROS2 and Unity3D so teams can develop and test mobile robot navigation in VR (fully virtual) and MR (passthrough overlay on real surfaces) within the same stack. It simulates LiDAR/IMU, streams ROS2 topics, and lets you “stand inside” the robot’s perception + SLAM loop.

One practical detail: their Unity↔ROS2 pipeline reports a mean LaserScan latency of 12.4 ms for a 360‑point scan (3 KB) at 10 Hz, which is fast enough to iterate on Nav2/SLAM behaviors without the UI feeling sluggish.
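A quick back-of-the-envelope check on those numbers, as a sketch. It assumes the 3 KB figure covers both float32 arrays in a `sensor_msgs/LaserScan` message (`ranges` and `intensities`) plus a small header; the paper's exact accounting may differ, and the function names are my own.

```python
FLOAT32_BYTES = 4  # LaserScan stores ranges and intensities as float32


def scan_payload_bytes(num_points: int, with_intensities: bool = True) -> int:
    """Approximate array payload of one scan, ignoring header and metadata."""
    arrays = 2 if with_intensities else 1  # assumption: intensities are populated
    return num_points * FLOAT32_BYTES * arrays


def period_fraction(latency_ms: float, rate_hz: float) -> float:
    """Share of one publish period that the reported latency consumes."""
    period_ms = 1000.0 / rate_hz
    return latency_ms / period_ms


payload = scan_payload_bytes(360)        # 2880 bytes, close to the quoted 3 KB
fraction = period_fraction(12.4, 10.0)   # 12.4 ms out of a 100 ms period

print(f"payload: {payload} B, latency share of period: {fraction:.1%}")
```

Under these assumptions the reported latency uses roughly an eighth of each 100 ms scan period, which leaves the rest for SLAM, planning, and rendering.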

Why it matters for industry: this is a reproducible path to shorten the sim→field cycle for AMRs (warehouse, hospitals, campuses) while keeping engineers and stakeholders aligned via immersive debugging.

How I’d pilot this in 10 business days

- Stand up one ROS2 Nav2 scenario + one Unity scene; define “no-collision waypoint success rate.”

- Mirror your sensor topics (scan/imu/odom/cmd_vel) and validate timing + latency budgets.

- Run 20 VR/MR sessions with engineers/operators; log failure modes and update maps/controllers daily.
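To make the first two steps concrete, here is a minimal sketch of how I might score a pilot from logged data. Everything in it is an illustrative assumption rather than something from the paper: the `WaypointAttempt` record, the function names, the 20 ms budget, and the sample values.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class WaypointAttempt:
    """One logged navigation attempt (hypothetical log schema)."""
    reached: bool   # robot arrived within the goal tolerance
    collided: bool  # any collision was recorded on the way


def no_collision_success_rate(attempts):
    """Fraction of attempts that reached the waypoint without colliding."""
    if not attempts:
        return 0.0
    clean = sum(1 for a in attempts if a.reached and not a.collided)
    return clean / len(attempts)


def latency_summary(samples_ms, budget_ms):
    """Summarise measured latencies against a budget in milliseconds."""
    if not samples_ms:
        raise ValueError("need at least one latency sample")
    over = sum(1 for s in samples_ms if s > budget_ms)
    return {
        "mean_ms": mean(samples_ms),
        "max_ms": max(samples_ms),
        "over_budget_fraction": over / len(samples_ms),
    }


# Made-up example data, only to show the call pattern.
attempts = [
    WaypointAttempt(reached=True, collided=False),
    WaypointAttempt(reached=True, collided=True),
    WaypointAttempt(reached=False, collided=False),
    WaypointAttempt(reached=True, collided=False),
]
rate = no_collision_success_rate(attempts)  # 2 of 4 attempts are clean

summary = latency_summary([10.0, 12.0, 14.0, 30.0], budget_ms=20.0)
print(f"success rate: {rate:.0%}, over budget: {summary['over_budget_fraction']:.0%}")
```

Counting a run as a success only when it both reaches the waypoint and avoids collisions keeps the metric honest: a robot that arrives after clipping a shelf should not score. Tracking the over-budget fraction alongside the mean also surfaces latency spikes that an average alone would hide.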