Real robotics ML is bottlenecked by simulation throughput more often than by model size.

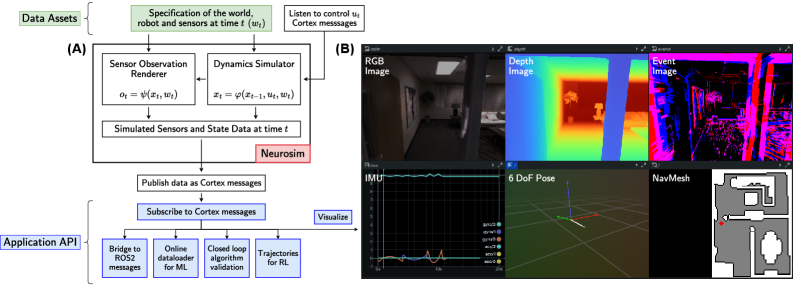

A recent arXiv paper introduces Neurosim, a high-performance simulator for neuromorphic robot perception. It supports event/DVS sensors, RGB cameras, depth sensors, IMUs, and agile multirotor dynamics in complex environments, while integrating cleanly with ML pipelines via a ZeroMQ messaging layer (“Cortex”).

The headline number is a reported peak of ~2700 FPS on a desktop GPU. For engineering teams working on perception + control (especially closed-loop), this lowers the cost of experimentation: more edge cases covered, faster ablation cycles, and tighter sim-in-the-loop debugging, with no overnight wait for data generation.
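To make the cost argument concrete, here is a back-of-the-envelope sketch of how long it takes to generate a dataset at a given sustained frame rate. Only the ~2700 FPS figure comes from the paper, and it is a peak, not a guaranteed sustained rate. The dataset size and the 60 FPS comparison point are assumptions chosen for illustration.

```python
def generation_hours(n_frames: int, fps: float) -> float:
    """Wall-clock hours to render n_frames at a sustained rate of fps."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return n_frames / fps / 3600.0


# Hypothetical dataset size, not taken from the paper.
N_FRAMES = 10_000_000

# 2700 FPS is the paper's reported peak; 60 FPS is an assumed slower simulator.
for label, fps in [("reported peak (2700 FPS)", 2700.0),
                   ("assumed slower simulator (60 FPS)", 60.0)]:
    print(f"{label}: {generation_hours(N_FRAMES, fps):.1f} h")
```

Under these assumptions the gap is roughly one hour versus almost two days, which is the difference between iterating within a working session and waiting for an overnight job.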

How we’d pilot this in 10 business days

- Days 1–3: Integrate Neurosim/Cortex into the existing data + training loop; align sensor timestamps and message formats.

- Days 4–7: Benchmark throughput/latency end-to-end (sim → pipeline → trainer) and quantify “effective FPS” (frames the trainer actually consumes per second) with real augmentations and logging enabled.

- Days 8–10: Run a closed-loop experiment (baseline vs. sim-augmented) and compare task metrics and failure modes under controlled scenario sweeps.
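The Days 4–7 benchmark can start from something as small as the sketch below: time a fixed number of frames through every downstream stage and divide. It assumes frames arrive from some Python iterable. `fake_sim`, `augment`, and `log_frame` are hypothetical stand-ins, not part of Neurosim or Cortex, and would be replaced by the real subscriber and pipeline stages.

```python
import time
from typing import Any, Callable, Iterable, Iterator


def effective_fps(frames: Iterable[Any],
                  stages: list[Callable[[Any], Any]],
                  n_frames: int,
                  clock: Callable[[], float] = time.perf_counter) -> float:
    """Frames per second delivered after every stage has run on each frame."""
    start = clock()
    done = 0
    for frame in frames:
        for stage in stages:
            frame = stage(frame)   # each stage returns the frame for the next one
        done += 1
        if done >= n_frames:
            break
    elapsed = clock() - start
    return done / elapsed if elapsed > 0 else float("inf")


def fake_sim(frame_bytes: int = 64 * 64) -> Iterator[bytes]:
    """Hypothetical stand-in for a simulator stream: yields dummy frames forever."""
    while True:
        yield bytes(frame_bytes)


def augment(frame: bytes) -> bytes:
    """Placeholder augmentation: reverse the buffer."""
    return frame[::-1]


def log_frame(frame: bytes) -> bytes:
    """Placeholder logging cost: compute a checksum and pass the frame through."""
    sum(frame)
    return frame


if __name__ == "__main__":
    raw = effective_fps(fake_sim(), [], n_frames=2000)
    full = effective_fps(fake_sim(), [augment, log_frame], n_frames=2000)
    print(f"source only: {raw:.0f} FPS, with augmentation + logging: {full:.0f} FPS")
```

Measuring with and without the stages separates the simulator's raw rate from what the trainer actually sees. The `clock` parameter is there so the function can be tested with a deterministic fake clock.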

Source

- Paper: https://arxiv.org/abs/2602.15018

- License: CC BY 4.0 — https://creativecommons.org/licenses/by/4.0/