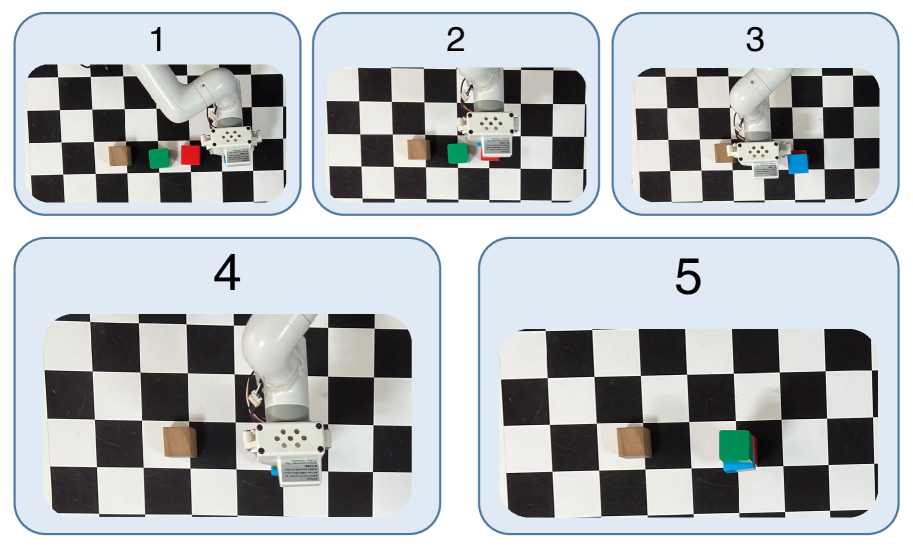

Robots often fail when executing free‑form instructions because open‑loop control is brittle and perception is limited. MALLVI introduces a multi‑agent closed‑loop framework that orchestrates specialized perception, high‑level reasoning, planning, and feedback agents to iteratively interpret an image and a language instruction and produce reliable manipulation actions. By coordinating Localizer, Decomposer, Thinker, and Reflector agents, MALLVI improves environmental understanding and enables error detection and recovery, leading to stronger task generalization in both simulation and real‑world experiments.
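
To make the closed‑loop idea concrete, here is a minimal Python sketch of how four such agents might be chained with a retry on failure. It is not MALLVI's actual code. The role I give each agent (Localizer finds objects, Decomposer splits the instruction into subtasks, Thinker picks an action, Reflector checks the outcome) is my reading of the names, and every function name, signature, and the toy `world` dictionary is a hypothetical stand‑in.

```python
from typing import Any, Callable, List, Tuple

Log = List[Tuple[str, int, bool]]  # (subtask, attempt index, reflector verdict)


def run_closed_loop(
    instruction: str,
    observe: Callable[[], Any],
    localize: Callable[[Any, str], Any],
    decompose: Callable[[str, Any], List[str]],
    think: Callable[[str, Any], Any],
    act: Callable[[Any], None],
    reflect: Callable[[str, Any], bool],
    max_retries: int = 2,
) -> Tuple[bool, Log]:
    """Run each subtask, re-observing and retrying when the reflector rejects it."""
    log: Log = []
    scene = localize(observe(), instruction)
    for subtask in decompose(instruction, scene):
        for attempt in range(max_retries + 1):
            # Re-perceive before every attempt so the plan uses fresh state.
            scene = localize(observe(), instruction)
            act(think(subtask, scene))
            ok = reflect(subtask, observe())
            log.append((subtask, attempt, ok))
            if ok:
                break
        else:
            # Retries exhausted for this subtask: give up on the whole task.
            return False, log
    return True, log


def demo() -> Tuple[bool, Log]:
    """Toy world (assumption): the first grasp slips, the second one holds."""
    world = {"held": False, "placed": False, "grasps": 0}

    def observe() -> dict:
        return dict(world)

    def localize(obs: dict, instruction: str) -> dict:
        return {"block": (0.1, 0.2), "bowl": (0.4, 0.2)}

    def decompose(instruction: str, scene: dict) -> List[str]:
        return ["pick block", "place block in bowl"]

    def think(subtask: str, scene: dict) -> tuple:
        if subtask.startswith("pick"):
            return ("pick", scene["block"])
        return ("place", scene["bowl"])

    def act(action: tuple) -> None:
        kind, _target = action
        if kind == "pick":
            world["grasps"] += 1
            world["held"] = world["grasps"] >= 2  # first grasp fails
        elif kind == "place" and world["held"]:
            world["held"], world["placed"] = False, True

    def reflect(subtask: str, obs: dict) -> bool:
        return obs["held"] if subtask.startswith("pick") else obs["placed"]

    return run_closed_loop(
        "put the block in the bowl",
        observe, localize, decompose, think, act, reflect,
    )


if __name__ == "__main__":
    print(demo())
```

The point of the sketch is the inner loop: an open‑loop controller would issue the pick once and move on, while here the failed grasp is caught by the reflector and retried after a fresh observation.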

How I’d pilot this in 10 business days

- Integrate MALLVI’s multi‑agent pipeline with your ROS stack and perception modules.

- Collect representative image + instruction pairs in your workspace.

- Test and benchmark closed‑loop performance against your baseline control system.
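
For the benchmarking step, a small harness along these lines could run both controllers over the same image + instruction pairs and report a success rate for each. Treat it as a sketch under assumptions: the file names, the instructions, and both stub policies are placeholders with arbitrary outcomes, not measured results, and you would swap in calls to your own baseline and to the closed‑loop pipeline.

```python
from typing import Callable, Dict, Iterable, Tuple

Episode = Tuple[str, str]            # (image path, instruction); hypothetical data
Policy = Callable[[str, str], bool]  # returns True if the task succeeded


def success_rates(
    episodes: Iterable[Episode], policies: Dict[str, Policy]
) -> Dict[str, float]:
    """Run every policy on the same episodes and return success rate per policy."""
    episode_list = list(episodes)
    rates: Dict[str, float] = {}
    for name, run in policies.items():
        wins = sum(1 for image, instruction in episode_list if run(image, instruction))
        rates[name] = wins / len(episode_list) if episode_list else 0.0
    return rates


# Placeholder episodes (assumption): replace with pairs collected in your workspace.
EPISODES = [
    ("img_000.png", "pick the red block"),
    ("img_001.png", "put the block in the bowl"),
    ("img_002.png", "pick the cup and put it in the bowl"),
    ("img_003.png", "open the drawer"),
]


def baseline_stub(image: str, instruction: str) -> bool:
    # Arbitrary stand-in outcome; replace with your baseline controller.
    return "pick" in instruction


def closed_loop_stub(image: str, instruction: str) -> bool:
    # Arbitrary stand-in outcome; replace with the closed-loop pipeline.
    return "bowl" in instruction


if __name__ == "__main__":
    print(success_rates(EPISODES, {"baseline": baseline_stub, "closed_loop": closed_loop_stub}))
```

Running both policies on an identical episode list keeps the comparison fair; logging per‑episode outcomes alongside the aggregate would also show which instructions each controller fails on.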